Streamlining ERP Master Data: Proven Solutions for Complex Data Processing

Why Master Data Degrades in Complex Supply Chains

Data degradation in an ERP system begins on the operational floor. Intense operational pressure combined with fragmented incoming data streams creates the perfect storm for poor data quality. Logistics chains run on speed. When employees must manually enter hundreds of shipping documents, invoices, and customs forms every day under strict deadlines, blind spots are inevitable. In high-pressure environments, the primary goal quickly shifts from accurate input to fast processing, simply to keep the physical freight moving. For sustainable business operations, accurate data processing, such as DataMondial's, is essential to stop these errors at the source.

These structural compromises in ERP data processing quickly lead to duplicates, typos, and empty fields. What starts as an operational ‘workaround’ in the planning department soon grows into a boardroom issue. Flawed master data produces slow, unreliable management information. Decisions on capacity, procurement, and financial forecasting end up based on a distorted picture of reality. Effective risk mitigation and cost control require source data that can be trusted.

The Variability of Incoming Data Sources

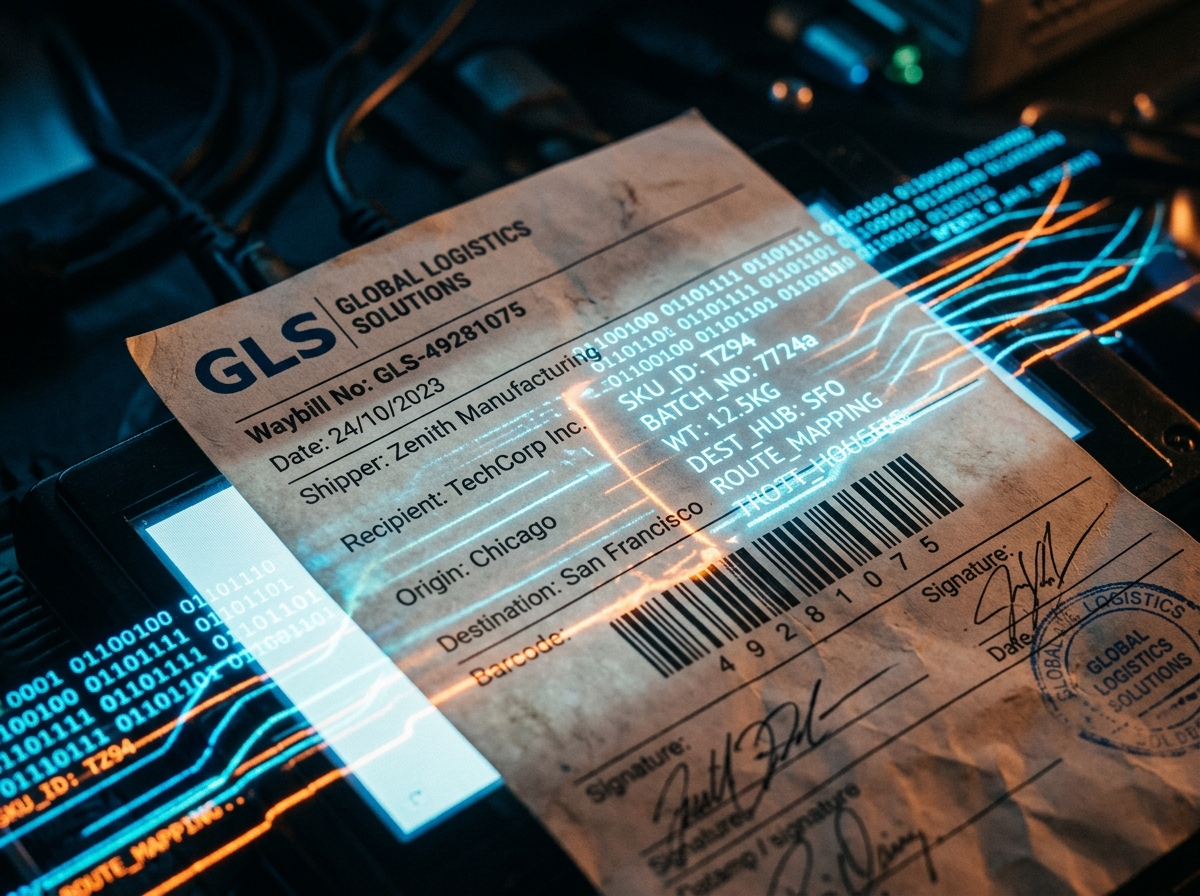

Every link in the supply chain uses its own formats. Suppliers send PDF invoices with entirely different layouts. Ocean freight forwarders communicate via unstructured emails. Customs portals demand specific XML or EDI integrations, while drivers hand in paper waybills (CMRs) at the terminal.

Modern Warehouse Management Systems (WMS) and Enterprise Resource Planning (ERP) platforms are built on rigid, relational data structures. The variability of external sources clashes directly with strict internal field requirements. Because rolling out standardized Electronic Data Interchange (EDI) across an entire supply chain is often unfeasible, translating external sources into internal systems remains a constant obstacle to clean data structures. In practice, this variety almost always forces a manual intervention step during document processing or ERP data entry.
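To make the translation problem concrete, here is a minimal Python sketch, assuming each supplier sends the same invoice data under its own field names. The supplier keys, field mappings, `InvoiceRecord` structure, and `to_internal` helper are all hypothetical; a real ERP integration would also have to handle dates, units, and number formats. Any record that cannot be mapped cleanly comes back with a list of problems, which is exactly where the manual intervention step begins.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical per-supplier mappings: each supplier labels the same data differently.
FIELD_MAPS = {
    "supplier_a": {"InvoiceNo": "invoice_number", "Total": "amount", "Cur": "currency"},
    "supplier_b": {"inv_id": "invoice_number", "gross_amount": "amount", "ccy": "currency"},
}

REQUIRED_FIELDS = ("invoice_number", "amount", "currency")


@dataclass
class InvoiceRecord:
    """Rigid internal structure, standing in for an ERP table row."""
    invoice_number: str
    amount: float
    currency: str


def to_internal(supplier: str, raw: dict) -> tuple[Optional[InvoiceRecord], list[str]]:
    """Translate a supplier-specific dict into the internal schema.

    Returns (record, problems). If anything cannot be mapped, record is None
    and problems explains why: the document needs a human.
    """
    mapping = FIELD_MAPS.get(supplier)
    if mapping is None:
        return None, [f"no mapping defined for supplier {supplier!r}"]
    # Rename known source fields; unknown fields are ignored.
    mapped = {mapping[k]: v for k, v in raw.items() if k in mapping}
    missing = [f for f in REQUIRED_FIELDS if f not in mapped or mapped[f] in ("", None)]
    if missing:
        return None, [f"missing field: {f}" for f in missing]
    try:
        amount = float(mapped["amount"])
    except (TypeError, ValueError):
        # e.g. European "1.250,00" does not fit the internal numeric field
        return None, [f"amount is not numeric: {mapped['amount']!r}"]
    return InvoiceRecord(str(mapped["invoice_number"]), amount, str(mapped["currency"]).upper()), []


print(to_internal("supplier_a", {"InvoiceNo": "A-17", "Total": "1250.00", "Cur": "eur"}))
# (InvoiceRecord(invoice_number='A-17', amount=1250.0, currency='EUR'), [])
print(to_internal("supplier_b", {"inv_id": "B-9", "ccy": "USD"}))
# (None, ['missing field: amount'])
```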

The Pitfall of Manual Corrections Under Time Pressure

Logistical standstills translate directly into lost revenue. When a truck is waiting for clearance or a vessel is ready for departure, physical operations take absolute precedence over administrative precision. Back-office teams hurriedly fill in mandatory fields or resort to dummy data just to force a process through the ERP system. While this reactive approach prevents short-term delays, it severely damages the long-term data architecture.
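Dummy values tend to follow recognizable patterns, so they can at least be detected after the fact. A minimal sketch, assuming a hypothetical set of placeholder strings and a hypothetical `find_dummy_fields` helper; in practice the set would be built from what actually turns up in your own database.

```python
# Hypothetical placeholder values typed just to get past a mandatory field.
PLACEHOLDERS = {"", "n/a", "na", "tbd", "xxx", "-", "0", "9999", "dummy", "test"}


def find_dummy_fields(record: dict, mandatory: list[str]) -> list[str]:
    """Return the mandatory fields that are absent or hold a placeholder value."""
    flagged = []
    for field in mandatory:
        value = record.get(field)
        # Compare case- and whitespace-insensitively against the placeholder set.
        if value is None or str(value).strip().lower() in PLACEHOLDERS:
            flagged.append(field)
    return flagged


shipment = {"hs_code": "9999", "gross_weight_kg": "TBD", "consignee": "Acme Logistics"}
print(find_dummy_fields(shipment, ["hs_code", "gross_weight_kg", "consignee"]))
# ['hs_code', 'gross_weight_kg']
```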

Over time, these quick fixes pile up. The system becomes bloated with inconsistent supplier naming conventions, missing weight specifications, and incorrect currency inputs. The degradation is insidious. Repairing a database containing hundreds of thousands of polluted records can take hundreds of hours of data cleansing, time that could be saved by getting ERP data processing right at the point of entry.
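Inconsistent supplier naming is often tackled by normalizing names before comparing them. The sketch below, with a hypothetical and deliberately short list of legal-form suffixes and made-up supplier names, strips accents, punctuation, and suffixes so that variants such as "Rotterdam Freight B.V." and "ROTTERDAM FREIGHT BV" collapse to one comparison key.

```python
import re
import unicodedata
from collections import defaultdict

# Hypothetical, incomplete list of legal-form suffixes to ignore when comparing.
LEGAL_SUFFIXES = {"bv", "nv", "gmbh", "ltd", "llc", "inc", "sa", "srl", "ag"}


def normalize_name(name: str) -> str:
    """Reduce a supplier name to a comparison key."""
    # Decompose accented characters and drop the accent marks ("ü" -> "u").
    text = unicodedata.normalize("NFKD", name)
    text = "".join(ch for ch in text if not unicodedata.combining(ch)).casefold()
    # Remove punctuation so "B.V." and "BV" compare equal.
    text = re.sub(r"[^\w\s]", "", text)
    tokens = [t for t in text.split() if t not in LEGAL_SUFFIXES]
    return " ".join(tokens)


def group_duplicates(names: list[str]) -> list[list[str]]:
    """Group raw supplier names that normalize to the same key."""
    groups = defaultdict(list)
    for name in names:
        groups[normalize_name(name)].append(name)
    return [g for g in groups.values() if len(g) > 1]


suppliers = ["Rotterdam Freight B.V.", "ROTTERDAM FREIGHT BV", "Müller Logistik GmbH",
             "Muller Logistik", "Nordic Cargo AB"]
print(group_duplicates(suppliers))
# [['Rotterdam Freight B.V.', 'ROTTERDAM FREIGHT BV'], ['Müller Logistik GmbH', 'Muller Logistik']]
```

Exact key matching only catches formatting variants. Typos such as "Rotterdm Freight" would need a similarity measure on top, ideally with a person confirming each merge.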

Solution 1: Full Automation via RPA

Robotic Process Automation (RPA) aims to remove the human factor entirely. Software bots replicate the actions of a human user through existing graphical user interfaces: they open emails, download attachments, copy text, and paste it into the correct ERP fields. However, RPA requires a heavy up-front investment before go-live. Processes must be mapped step by step, scripts written, and error-handling routines built.

RPA bots perform best within strictly defined rules. Scalability is a key strength of a bot infrastructure, because once a process is programmed, the marginal cost of processing one more document is very low. The complete reliance on highly structured data, however, acts as a hard limit. The moment incoming data falls outside the predefined parameters, the automated process grinds to a halt.

Operational and Financial Benefits at Scale

RPA processes predictable, repetitive data streams faster than any back-office team could. A bot works without breaks and never makes a typo, provided the source data remains legible and structured. For organizations that receive thousands of standardized documents daily, such as electronic purchase orders with a fixed XML structure, full automation quickly drives down operational costs.
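RPA bots are normally configured in a vendor's own designer tool rather than written in Python, so the following only illustrates the rule-based behaviour described above; it does not represent any RPA product. It assumes a hypothetical fixed purchase-order layout (`PurchaseOrder/Header/PONumber` and so on): documents that match are parsed in one pass, while any deviation raises an error instead of guessing.

```python
import xml.etree.ElementTree as ET


class TemplateError(Exception):
    """Raised when a document deviates from the fixed structure the bot expects."""


# Hypothetical fixed layout the bot was scripted against: field -> element path.
EXPECTED_PATHS = {
    "po_number": "Header/PONumber",
    "supplier_id": "Header/SupplierID",
    "quantity": "Line/Quantity",
}


def parse_purchase_order(xml_text: str) -> dict:
    """Extract fields from a purchase order, or fail loudly on any deviation."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        raise TemplateError(f"not well-formed XML: {exc}") from exc
    result = {}
    for field, path in EXPECTED_PATHS.items():
        node = root.find(path)  # path is relative to the root element
        if node is None or not (node.text or "").strip():
            raise TemplateError(f"missing element {path}")
        result[field] = node.text.strip()
    if not result["quantity"].isdigit():
        raise TemplateError(f"quantity is not a whole number: {result['quantity']!r}")
    result["quantity"] = int(result["quantity"])
    return result


order = (
    "<PurchaseOrder><Header><PONumber>PO-1001</PONumber>"
    "<SupplierID>S-42</SupplierID></Header>"
    "<Line><Quantity>12</Quantity></Line></PurchaseOrder>"
)
print(parse_purchase_order(order))
# {'po_number': 'PO-1001', 'supplier_id': 'S-42', 'quantity': 12}
```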

The Limits of RPA with Unstructured Logistics Data

Automation fails when faced with anomalies. Every day, logistics companies receive packing slips with handwritten notes, scanned PDFs covered in coffee stains, and invoices from suppliers who have suddenly changed their template layout. Rule-based RPA cannot interpret this unstructured data. At the slightest deviation, the bot throws an ‘exception error’ and hands the file back for manual processing anyway. In many cases, this recovery workflow takes more time than if an employee had handled the complex document manually from the start.
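The exception loop itself is easy to sketch. In this hypothetical example, `strict_parser` stands in for a bot scripted against one exact layout (`AMOUNT=<number>`), and `run_batch` is an illustrative helper: every document the parser cannot handle lands in a manual queue together with the reason, which is the human recovery workflow described above.

```python
from typing import Callable, Iterable


def strict_parser(content: str) -> dict:
    """Hypothetical bot rule: accepts only the exact layout 'AMOUNT=<number>'."""
    key, sep, value = content.partition("=")
    if sep != "=" or key != "AMOUNT":
        raise ValueError("unexpected layout")
    return {"amount": float(value)}  # float() also raises on '12,00' style decimals


def run_batch(documents: Iterable[tuple[str, str]],
              process: Callable[[str], dict]) -> tuple[dict, list]:
    """Run process() over (doc_id, content) pairs; failures go to a manual queue."""
    processed: dict = {}
    manual_queue: list = []
    for doc_id, content in documents:
        try:
            processed[doc_id] = process(content)
        except Exception as exc:  # any anomaly: the bot stops, a person takes over
            manual_queue.append((doc_id, str(exc)))
    return processed, manual_queue


docs = [
    ("inv-1", "AMOUNT=100.50"),
    ("inv-2", "Amount: 100,50 (handwritten)"),
    ("inv-3", "AMOUNT=12,00"),
]
done, manual = run_batch(docs, strict_parser)
print(done)                    # {'inv-1': {'amount': 100.5}}
print([d for d, _ in manual])  # ['inv-2', 'inv-3']
```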

Solution 2: Scaling the Internal Back-Office Team

Hiring additional personnel to solve local capacity issues is a common corporate reflex. Keeping data entry and back-office processes in-house provides a tangible sense of control. Your internal workforce has specific domain knowledge and understands your client niche intimately. Human validation naturally compensates for the unpredictable nature of forwarding documents: an employee can read the context of a vague email and instantly spot errors on a cargo manifest that a bot would process blindly.

In practice, however, companies run into harsh market realities. Ongoing labor shortages in the logistics sector severely limit expansion capabilities. Recruitment drives for administrative staff often drag on for months, a direct symptom of the structural scarcity in today’s job market.

Flexibility Through Direct Local Communication

Local teams can pivot quickly when confronted with complex file exceptions. A back-office clerk can simply walk over to a customs declarant's or planner's desk to clarify an ambiguity. This direct communication allows immediate flexibility. Aligning with colleagues and correcting data together preserves local context, which is especially critical for urgent shipments missing proper documentation.

Current Barriers: Recruitment and Data Fatigue

Costly recruitment cycles slow business growth, while salary expectations keep rising due to labor scarcity. Moreover, staff hired for repetitive, standardized data work often develop “data fatigue” within a short time. Transcribing and validating freight information eight hours a day causes mental exhaustion and saps motivation. The result is high staff turnover and, as concentration drops, new errors in your ERP database: the very problem the extra hires were meant to solve.

Solution 3: Hybrid Data Processing via EU Nearshoring

Business Process Outsourcing (BPO) offers a rational alternative to the capacity dilemma, provided it is structured correctly. The hybrid model uses automation and AI as the first line of defense, pairing them with the cognitive power of highly educated professionals for validation and exception handling. An effective BPO solution for master data deliberately positions these human validation teams in cost-efficient European hubs (such as Romania).

Unlike traditional offshore routes to Asia, nearshoring within the EU keeps data processing inside the GDPR's jurisdiction. This avoids the additional safeguards required for transferring personal data to third countries and makes compliance with European privacy and data-protection rules far more straightforward. Partnering with a BPO requires an initial transition period for process mapping and system setup. After that, the model scales flexibly: the nearshore team absorbs seasonal peaks in freight volume without adding headcount to your internal payroll.

The Synergy Between Technology and Human Quality Assurance

Standalone automation falls short when the goal is to process 100% of complex logistics documents. The hybrid methodology targets exactly this gap. OCR and AI do the heavy lifting by extracting usable data from incoming sources. Dedicated nearshore teams then handle the remaining exceptions, validating the software's output and completing missing logistics fields based on their insight and experience. This setup keeps operating costs low while reaching a level of data accuracy that neither standalone software nor internal teams can reliably maintain on their own.
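One common way to split the work between software and people is a confidence threshold: fields the extraction engine is sure about pass straight through, and everything else goes to a human validator. A minimal sketch, assuming a hypothetical OCR/AI extractor that reports a confidence score between 0 and 1 per field; the `ExtractedField` and `route_fields` names and the 0.90 threshold are illustrative, not a recommendation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExtractedField:
    name: str
    value: Optional[str]  # None when the extractor found nothing
    confidence: float     # 0.0 to 1.0, as reported by the (hypothetical) extractor


def route_fields(fields: list[ExtractedField],
                 threshold: float = 0.90) -> tuple[dict[str, str], list[str]]:
    """Split extracted fields into auto-accepted values and a human review list."""
    auto_accepted: dict[str, str] = {}
    needs_review: list[str] = []
    for field in fields:
        if field.value is None or field.confidence < threshold:
            # Missing or uncertain: a validator checks or completes this field.
            needs_review.append(field.name)
        else:
            auto_accepted[field.name] = field.value
    return auto_accepted, needs_review


fields = [
    ExtractedField("invoice_number", "INV-2024-0193", 0.98),
    ExtractedField("gross_weight_kg", "1840", 0.71),
    ExtractedField("hs_code", None, 0.0),
]
accepted, review = route_fields(fields)
print(accepted)  # {'invoice_number': 'INV-2024-0193'}
print(review)    # ['gross_weight_kg', 'hs_code']
```

Raising the threshold sends more fields to people and lowers the risk of silent errors; lowering it does the opposite, so the value is usually tuned against measured error rates.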

Checklist: Is Your Supply Chain Data Suitable for Nearshoring?

Certain indicators reveal whether an EU-based BPO model will be effective for your specific ERP databases:

- You process a structurally high volume of physical and digital administrative documents.

- The incoming data exhibits highly variable structures (e.g., varying formats per supplier).

- Protecting corporate data demands unconditional adherence to European privacy regulations (GDPR).

- You require processing and validation within the same time zone, covering multiple European languages.

- Your business growth naturally results in peaks and valleys in data volume.

Decision Framework: Which Strategy Fits Your Organization?

Choosing an effective strategy for your master data requires a hard look at two main pillars: data volume and document complexity (structured vs. unstructured). Smaller organizations with low document counts that rely heavily on informal internal workflows often benefit most from keeping operations local. However, when volumes scale up and start creating operational bottlenecks, companies must choose between a technology-only route and a hybrid QA strategy anchored in Europe.

Comparative Table: Strategies for ERP Data Management

| Strategy | Implementation Time | Data Quality Assurance | Cost Control |
|---|---|---|---|
| RPA (Full Automation) | Long (complex setup) | Moderate to high (stalls on exceptions) | Highly efficient for high, structured volumes |
| Scaling Internal Team | Long (slow recruitment processes) | High, but vulnerable to fatigue | Low (high personnel costs and retention pressure) |
| Hybrid Nearshoring (EU) | Medium (process mapping and transition) | Structurally high (includes human validation) | High (flexible capacity, lower operational costs) |

Determining Strategy Based on Logistics Volume

For organizations processing millions of data points, the right choice follows from volume and structure. Very high volumes of fully structured data call for a pure software solution via RPA. If volumes remain low and require close, ongoing collaboration with the warehouse floor, a dedicated local team offers the greatest advantage. But what if you handle a mid-to-high volume of complex, unstructured logistics paperwork, such as handwritten waybills and customs documentation in constantly varying formats? Under those conditions, an EU-based hybrid nearshoring model is the most reliable fit.
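The framework can be summarized as a simple decision rule. The sketch below is a toy encoding of the three scenarios above; the `recommend_strategy` function, the volume cut-offs, and the 99% structured-share threshold are hypothetical placeholders that each organization would set from its own figures.

```python
# Hypothetical cut-offs in documents per day; replace with your own figures.
LOW_VOLUME = 200
HIGH_VOLUME = 5000
STRUCTURED_THRESHOLD = 0.99  # share of documents that arrive fully structured


def recommend_strategy(docs_per_day: int, structured_share: float,
                       needs_floor_contact: bool) -> str:
    """Map volume and document structure to one of the three strategies."""
    if not 0.0 <= structured_share <= 1.0:
        raise ValueError("structured_share must be between 0 and 1")
    # Low volume with close ties to the warehouse floor: keep it local.
    if docs_per_day < LOW_VOLUME and needs_floor_contact:
        return "internal team"
    # Very high volume of (almost) fully structured data: pure software.
    if docs_per_day >= HIGH_VOLUME and structured_share >= STRUCTURED_THRESHOLD:
        return "RPA"
    # Mid-to-high volume with a meaningful share of unstructured paperwork.
    if docs_per_day >= LOW_VOLUME and structured_share < STRUCTURED_THRESHOLD:
        return "hybrid nearshoring"
    # Anything else falls outside the three scenarios described in the text.
    return "review case by case"


print(recommend_strategy(1500, 0.4, False))  # hybrid nearshoring
```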

Choose robust, accurate master data to protect your business continuity without sacrificing operational agility. Discover how DataMondial’s hybrid BPO framework can relieve your core operations through secure, dependable ERP data processing from Romania. When your goal is system accuracy, professional data processing from DataMondial is the logical next step. Contact our data specialists for a no-obligation consultation and find the efficiency strategy that best fits your organization.