Uncovering Cost Leaks: The Impact of Inconsistent Data Structures in Your Procurement ERP

How Incorrect Item Data Disrupts the Payment Cycle

Operational delays and direct financial losses in supply chains often stem from seemingly minor discrepancies in master data. A single typo in the procurement department can create a cascade of roadblocks in financial administration and on the shipping floor. The foundation of a streamlined procure-to-pay (P2P) process requires the data on a purchase order to perfectly match both the incoming invoice and the physical goods receipt. As soon as this three-way match fails, the payment cycle grinds to a halt. Professional data processing is therefore essential to ensure business continuity.
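To make the three-way match concrete, here is a minimal sketch in Python. The `Line` record, its field names, and the exact comparison rules are assumptions chosen for illustration; a real ERP typically applies configurable tolerances rather than strict equality.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List, Optional


@dataclass(frozen=True)
class Line:
    """One document line (hypothetical structure, not an ERP schema)."""
    item_id: str
    quantity: Decimal
    unit: str                             # unit of measure, e.g. "PCS"
    unit_price: Optional[Decimal] = None  # goods receipts carry no price


def three_way_match(po: Line, receipt: Line, invoice: Line) -> List[str]:
    """Return the mismatches found; an empty list means the invoice may be paid."""
    issues = []
    if not (po.item_id == receipt.item_id == invoice.item_id):
        issues.append("item mismatch")
    if not (po.unit == receipt.unit == invoice.unit):
        issues.append("unit of measure mismatch")
    elif invoice.quantity > receipt.quantity:
        # Quantities are only comparable when all three units agree.
        issues.append("invoiced quantity exceeds received quantity")
    if po.unit_price != invoice.unit_price:
        issues.append("price mismatch")
    return issues
```

Any non-empty result would block the automated payment run and route the invoice to a person for review.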

A frequent disruption arises from differences in recorded Units of Measure (UoM). A purchase order might be created in the ERP system based on individual pieces, while the supplier bases the corresponding invoice on pallets or colli. The system flags a data mismatch and blocks the automated payment run. Overcoming this roadblock requires time-consuming human intervention. Financial professionals must manually open the file, consult the procurement department regarding the agreed-upon units, communicate with the supplier, and manually correct the conversion factor in the system. These incidental actions drive up the operational cost per processed invoice, extend turnaround times, and result in missed early-payment discounts.
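A maintained conversion table lets the system reconcile such documents without human intervention. The sketch below assumes hypothetical conversion factors (20 pieces per collo, 400 pieces per pallet) for a single item; in practice these factors live in the item master and differ per product.

```python
from decimal import Decimal

# Hypothetical conversion factors for one item: pieces per packaging unit.
PIECES_PER_UNIT = {
    "PCS": Decimal("1"),
    "COLLO": Decimal("20"),
    "PAL": Decimal("400"),
}


def to_pieces(quantity: Decimal, unit: str) -> Decimal:
    """Convert a quantity in any known unit to individual pieces."""
    try:
        return quantity * PIECES_PER_UNIT[unit]
    except KeyError:
        raise ValueError(f"no conversion factor for unit {unit!r}") from None


def quantities_agree(po_qty: Decimal, po_unit: str,
                     inv_qty: Decimal, inv_unit: str) -> bool:
    """Compare a purchase order and an invoice after normalizing both to pieces."""
    return to_pieces(po_qty, po_unit) == to_pieces(inv_qty, inv_unit)
```

With these assumed factors, an order for 800 pieces and an invoice for 2 pallets agree, while an unknown unit raises an error instead of silently passing.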

In transshipment and transport, inaccurate item data translates directly into financial liabilities. Entering an incorrect weight or volume in cubic meters (CBM) into your master data immediately compromises load planning. If the system lists an item at 0.5 CBM instead of its actual 0.05 CBM, the planning software will calculate that a shipping container or truck is full, when in reality, it remains largely empty. Unwittingly, the company ends up paying to ship air.
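The arithmetic behind this example can be sketched as follows. The usable capacity of 60 CBM is an assumed figure for illustration only; actual capacity depends on the container or trailer type.

```python
from decimal import Decimal


def planned_vs_actual(capacity_cbm: Decimal,
                      recorded_cbm: Decimal,
                      actual_cbm: Decimal):
    """Plan a load from the recorded volume, then measure the real fill rate."""
    # The planner fills the container using the (wrong) recorded volume per unit.
    units_planned = int(capacity_cbm // recorded_cbm)
    # The physical goods occupy the actual volume per unit.
    actual_volume = units_planned * actual_cbm
    utilisation = actual_volume / capacity_cbm
    return units_planned, actual_volume, utilisation


units, volume, utilisation = planned_vs_actual(
    capacity_cbm=Decimal("60"),    # assumed usable capacity
    recorded_cbm=Decimal("0.5"),   # value in master data
    actual_cbm=Decimal("0.05"),    # real volume per unit
)
print(units, volume, f"{utilisation:.0%}")
```

Under these assumptions the planner considers the container full at 120 units, while the goods occupy only 6 CBM, or 10% of the capacity paid for.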

The Domino Effect of CBM and Weight Mismatches

An administrative typo in the CBM or weight fields of your master data triggers a costly domino effect. When the recorded volume is too large, suboptimal container loading inflates the transport cost per shipped unit. Conversely, when the recorded volume is too small, insufficient transport capacity is reserved and goods are left behind on the loading dock. Stranded inventory forces ad-hoc emergency measures, such as booking expensive express or air freight shipments just to meet contractual delivery deadlines. Consequently, transport costs soar well beyond the amounts originally calculated in the procurement ERP.

Why ERP Implementations Mask the Data Problem

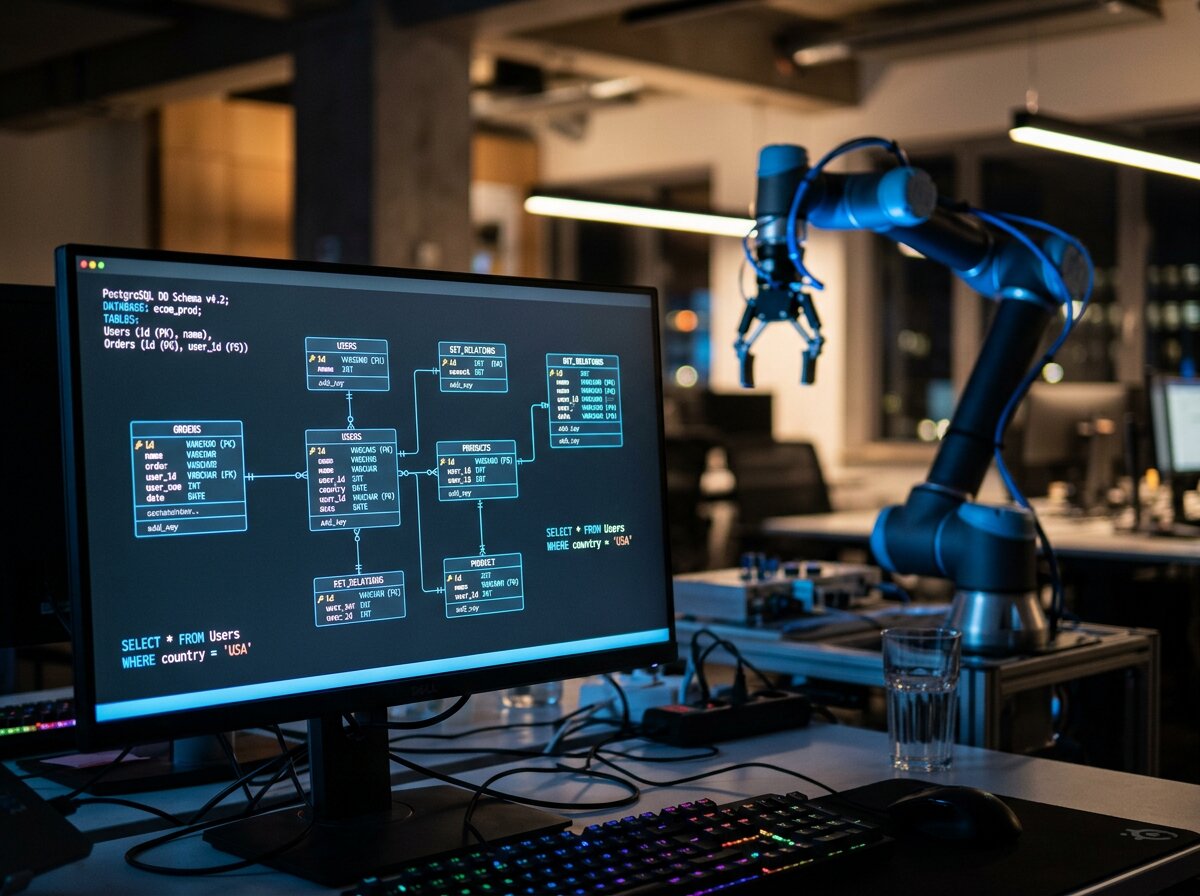

Deploying new software or process automation does not inherently improve data quality. Organizations often mistakenly treat an ERP migration as the ultimate fix for data issues. In reality, a new system simply modernizes historical data pollution and moves it between departments much faster. The focus must shift from the software package itself to the underlying, human-driven data entry processes.

Manually transferring and entering data under time pressure in a dynamic supply chain inevitably leads to input errors. Expecting 100% flawless manual entry, without rigorous system validations and objective human quality control, paves the way for failing business processes. Companies that invest heavily in automation solutions while skipping master data cleanup are essentially building a highly sophisticated shell around a rotten core. When the raw input is corrupt, faster processes only generate errors faster.

The Blind Spot of Robotic Process Automation (RPA)

Automation technologies like RPA are programmed to execute transactions at lightning speed based on predefined rules. However, a software robot’s critical blind spot is its inability to question the logic of polluted source data. When your master data contains an incorrect pricing tier or a mistaken currency code, the RPA bot simply executes those flawed instructions at scale. Until a tightly governed and harmonized database is in place, an RPA implementation will repeatedly stall or systematically generate procurement errors. Data accuracy is therefore a non-negotiable prerequisite before automation can deliver real returns.
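One way to protect a bot from polluted input is a validation gate that quarantines suspect records before they reach the automation. The sketch below is illustrative only: the field names, the allow-list of currency codes, and the price rule are assumptions, not the behavior of any particular RPA product.

```python
# Assumed allow-list; a real deployment would load the currencies it trades in.
ALLOWED_CURRENCIES = {"EUR", "USD", "GBP"}


def validate_record(record: dict) -> list:
    """Return the validation errors for one master data record."""
    errors = []
    currency = record.get("currency")
    if currency not in ALLOWED_CURRENCIES:
        errors.append(f"unknown currency code: {currency!r}")
    price = record.get("unit_price")
    is_number = isinstance(price, (int, float)) and not isinstance(price, bool)
    if not is_number or price <= 0:
        errors.append("unit_price must be a positive number")
    return errors


def split_for_automation(records: list):
    """Separate records the bot may process from those needing human review."""
    clean, quarantined = [], []
    for record in records:
        errors = validate_record(record)
        if errors:
            quarantined.append((record, errors))
        else:
            clean.append(record)
    return clean, quarantined
```

Only the `clean` list would be handed to the bot; the quarantined records, with their error messages, go to a data steward.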

Tangible Margin Loss Due to Polluted Supplier Profiles

Fragmented supplier profiles cause direct procurement losses and leave strategic decision-making rudderless. Over the years, duplicate profiles for the exact same supplier frequently emerge within logistics and procurement ERPs. A company might place orders with ‘Supplier X’, but invoices are also booked to ‘Supplier X Ltd.’ or ‘Supplier X International’. Because of this fragmentation, the actual purchasing volume is artificially split across multiple entities in the system.

This split prevents procurement departments from unlocking volume discounts and rebate thresholds. A contractual discount that kicks in at 10,000 units won’t trigger if the system mistakenly records 6,000 units under profile A and 4,000 under profile B. The organization continues to purchase at outdated rates that no longer reflect market value, simply because the recorded volume cannot be leveraged during contract negotiations. Furthermore, polluted data directly corrupts C-level spend analysis. A CFO or COO ends up basing cost-control measures on reports that paint a falsely fragmented picture of outgoing cash flows.
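The effect of consolidating duplicate profiles can be sketched as follows. The suffix list and the normalization rule are simplifying assumptions; names that differ only by a word such as "International" may belong to genuinely separate legal entities, so candidate matches should be reviewed by a person before profiles are merged.

```python
import re
from collections import defaultdict

# Illustrative list of name parts to ignore when comparing supplier names.
IGNORED_TOKENS = {"ltd", "limited", "international", "bv", "gmbh", "inc"}


def normalize_supplier(name: str) -> str:
    """Reduce a supplier name to a comparison key (lowercase, no punctuation)."""
    tokens = re.sub(r"[^\w\s]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in IGNORED_TOKENS)


def consolidated_volume(orders) -> dict:
    """Sum ordered units per normalized supplier key."""
    totals = defaultdict(int)
    for supplier_name, units in orders:
        totals[normalize_supplier(supplier_name)] += units
    return dict(totals)


orders = [("Supplier X", 6000), ("Supplier X Ltd.", 4000)]
DISCOUNT_THRESHOLD = 10_000  # threshold from the example above

for key, units in consolidated_volume(orders).items():
    print(key, units, units >= DISCOUNT_THRESHOLD)
```

Taken separately, neither profile reaches the threshold; combined under one key, the 10,000 units qualify for the discount.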

Three Indicators of Distorted Logistics Reporting Caused by Data Inconsistency

- Undefined residual categories in spend analysis: When management reports show a substantial percentage of procurement expenditure categorized as ‘miscellaneous’ or ‘other’, the supplier profiles are missing the correct master data categories or product codes.

- Recurring manual corrections on transport invoices: A high frequency of negative variance alerts between pre-calculated freight rates in the ERP and the actual forwarder’s invoice points to systematically incorrect weight or volume units in the master data.

- Anomalies in supplier consolidation: Analytics reports showing unusually low order values scattered across an abnormally high number of suppliers strongly indicate the presence of multiple, duplicated profiles for the exact same physical trading partner.
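The first indicator is straightforward to measure. A minimal sketch, assuming spend is already available as totals per category and that residual categories carry labels such as "miscellaneous" or "other" (both assumptions about the report layout):

```python
def residual_share(spend_by_category: dict,
                   residual_labels=("miscellaneous", "other")) -> float:
    """Return the fraction of total spend booked to residual categories."""
    total = sum(spend_by_category.values())
    if total == 0:
        return 0.0
    residual = sum(
        amount
        for category, amount in spend_by_category.items()
        if category.strip().lower() in residual_labels
    )
    return residual / total
```

What share counts as "substantial" is a judgment call for each organization; tracking the figure over time shows whether categorization is improving.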

The Limitations of Internal Cleanup Efforts

Putting out ad-hoc fires via the internal logistics or finance team is an ineffective way to solve master data problems. Back-office employees spend hours every week resolving individual incidents: they unblock a stalled purchase invoice or manually correct a load list for one specific shipment. Meanwhile, the source data (the item or supplier profile within the ERP) remains untouched, so the exact same problem recurs on the very next purchase order or transport booking. Internal teams lack the bandwidth to structurally harmonize thousands of SKUs (Stock Keeping Units) because daily operational demands will always take precedence.

Launching a massive, retrospective project to clean up every single data field in the entire system is rarely cost-effective, because a database typically houses many outdated items or suppliers that have been inactive for years. Streamlining master data in ERP systems therefore demands a highly targeted approach. By focusing exclusively on active master data and critical dimensions (such as price, weight, volume units, and supplier IDs), you accelerate Return on Investment (ROI). This pragmatic approach separates the ad-hoc treatment of symptoms from structural, strategic data management. It establishes a pristine foundation for data-driven processes and organizational scalability.
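Scoping the cleanup to active records can be as simple as filtering on the date of the last transaction. The sketch below assumes each record carries a `last_transaction` date and uses a 365-day window; both the field name and the window are illustrative choices.

```python
from datetime import date, timedelta


def select_active(records: list, today: date, max_idle_days: int = 365) -> list:
    """Keep only records with a transaction inside the activity window."""
    cutoff = today - timedelta(days=max_idle_days)
    return [r for r in records if r["last_transaction"] >= cutoff]


# Hypothetical item master extract.
items = [
    {"sku": "A-100", "last_transaction": date(2024, 5, 1)},
    {"sku": "B-200", "last_transaction": date(2019, 3, 15)},
]
active = select_active(items, today=date(2024, 6, 30))
print([item["sku"] for item in active])
```

Only the records returned by `select_active` would enter the cleanup queue, where the critical fields are then verified.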

Accurate data structures form the bedrock of scalable, automated, and profitable supply chains, whereas erroneous data drives up direct costs in transport and procurement. Attempting to resolve historical master data pollution internally often lacks both focus and capacity. This calls for external, targeted stewardship over data accuracy. As your BPO partner, DataMondial takes this structural management of data processing entirely off your hands through a secure, EU-compliant nearshoring structure. Contact us via our website, or explore how we streamline master data in ERP systems, to discover how we efficiently optimize your back-office processes and data structures without placing additional strain on your internal teams.